Per Statista, "The total amount of data created, captured, copied, and consumed globally is forecast to increase rapidly, reaching 64.2 zettabytes in 2020. Over the next five years up to 2025, global data creation is projected to grow to more than 180 zettabytes."

In a hybrid business world, data has become the essential commodity of trade, growth, and success. Geographically scattered access, lower tolerance for poor performance, and a growing need for scalability have put disk space monitoring at the core of many organizations' server management strategies.

The same report from Statista says, "In line with the strong growth of the data volume, the installed base of storage capacity is forecast to increase, growing at a compound annual growth rate of 19.2 percent over the forecast period from 2020 to 2025."

This rise in data volume has made monitoring disk utilization essential for organizations that need to ensure disk space availability, minimize the risk of unforeseen downtime, and prevent server latency. A disk space monitor lets you set monitoring thresholds and triggers alerts when disk usage crosses them. This keeps end users productive without interruption and helps admins forecast capacity and plan disk upgrades and maintenance.
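To make the threshold idea concrete, here is a minimal Python sketch of a warning/critical check on a single filesystem. It uses the standard library's `shutil.disk_usage`; the 80% and 90% thresholds and the helper names `usage_percent` and `classify` are illustrative assumptions, not values or names taken from any particular monitoring product.

```python
import shutil

# Illustrative thresholds (percent of total capacity used); tune these per server.
WARNING_PCT = 80.0
CRITICAL_PCT = 90.0


def usage_percent(path: str = "/") -> float:
    """Return the percentage of disk space used on the filesystem that holds `path`."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (in bytes)
    return usage.used / usage.total * 100


def classify(percent_used: float,
             warning: float = WARNING_PCT,
             critical: float = CRITICAL_PCT) -> str:
    """Map a usage percentage to an alert level."""
    if percent_used >= critical:
        return "CRITICAL"
    if percent_used >= warning:
        return "WARNING"
    return "OK"


if __name__ == "__main__":
    pct = usage_percent("/")
    print(f"/ is {pct:.1f}% full -> {classify(pct)}")
```

A real monitor would run this check on a schedule across many servers and route the result to an alerting channel; the sketch shows only the threshold logic. Because `df` computes its percentage as used / (used + available), the figure here can differ slightly from what `df` reports on filesystems with reserved blocks.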

However, monitoring disk space at the enterprise level takes more than staring at performance graphs and numbers. You need a long-term business strategy that accounts for all the interconnected and underlying factors behind disk space consumption, along with a deeper understanding of your business operations, to get a unified view of disk utilization across your network.

The complexity of this process creates challenges that, if not tackled properly, lead to adverse effects in the long run. The major challenges in disk space monitoring are discussed below.

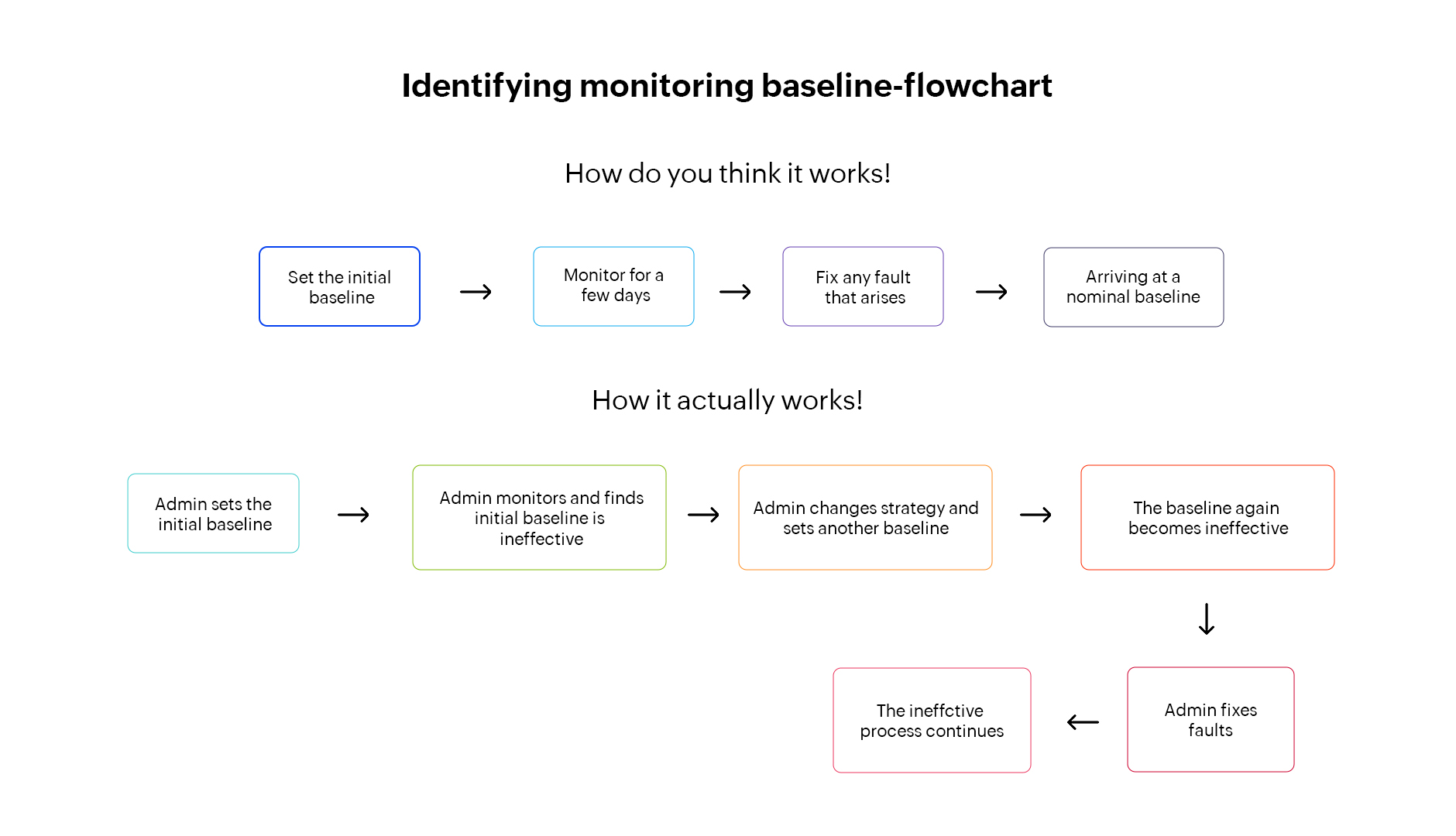

Identifying the nominal disk space usage baseline for a server or any other device is difficult because ideal disk utilization constantly changes. The utilization level you consider safe today may put your network at risk tomorrow. Disk space usage varies with business factors such as how often a server is used, end users' usage patterns, and the type of server (local or global).

For instance, consider an e-commerce business where the nominal disk utilization baseline on a SQL server is set to 70% by default. But when there's a flash sale running on specific days or for specific products, the number of sign-ups and orders will shoot up.

Technically, the surge in I/O requests to the server drives up disk utilization, pushing the server's disk usage above the baseline during the sale and back below it afterward. This can cause I/O latency and degrade network performance.

Hence, before setting a baseline, you need to:

Tip: To handle sudden surges in data load on the server, set the alert threshold slightly below the nominal baseline so you're warned before usage reaches it.
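One way to act on both points, a baseline that depends on the business scenario and a threshold set slightly below it, is to derive a separate baseline for each context from historical samples. The Python sketch below assumes usage samples are already collected and tagged by context; the context names, sample values, and the 3-point margin are hypothetical, and the mean-plus-k-standard-deviations baseline is a common statistical choice swapped in for illustration rather than a method the article prescribes.

```python
from statistics import mean, pstdev

# Hypothetical history: disk usage samples (% of capacity) tagged by business context.
HISTORY = {
    "regular": [60, 62, 64, 66, 68],
    "flash_sale": [80, 84, 88, 86, 82],
}


def nominal_baseline(samples: list[float], k: float = 2.0) -> float:
    """Upper bound of normal usage: mean plus k population standard deviations, capped at 100%."""
    return min(mean(samples) + k * pstdev(samples), 100.0)


def thresholds_by_context(history: dict[str, list[float]],
                          margin: float = 3.0,
                          k: float = 2.0) -> dict[str, float]:
    """Alert threshold per context, set `margin` percentage points below that context's baseline."""
    return {ctx: nominal_baseline(samples, k) - margin for ctx, samples in history.items()}


def should_alert(usage_pct: float, context: str, thresholds: dict[str, float]) -> bool:
    """True when current usage has reached the threshold for the active business context."""
    return usage_pct >= thresholds[context]


if __name__ == "__main__":
    thresholds = thresholds_by_context(HISTORY)
    for ctx, value in thresholds.items():
        print(f"{ctx}: alert at {value:.1f}%")
    print(should_alert(75, "regular", thresholds))     # True: unusual on a regular day
    print(should_alert(75, "flash_sale", thresholds))  # False: expected during a sale
```

With this data, the regular-day baseline lands near 70%, in line with the SQL server example, while sale days get a higher one. Recomputing the thresholds over a rolling window of recent samples would keep them current as usage patterns shift.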

Disk space isn't an isolated resource. It's influenced by associated factors, such as issues in the applications, processes, and services running on a server, which can eventually lead to abnormal disk space consumption.

Wondering how? Let's go through another example. When you run a high-end application that has errors or bugs on a server, it can consume far more disk space than usual (for example, by writing runaway log or temporary files) and drive disk usage up quickly. In this case, manually cleaning up the device or expanding disk space won't solve the problem, because the outages aren't caused by a shortage of disk space but by the abnormal consumption of the faulty process or application. Until you fix the bugs or stop the application from running on the device, the issue will persist.

Given that, monitoring disk space alone isn't sufficient. When a fault occurs, you might overlook these associated factors and wrongly conclude that the outages are due to insufficient disk space.
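A raw usage percentage doesn't say what is consuming the space. As a rough, stdlib-only sketch, comparing two snapshots of per-directory usage can point toward the application or service responsible, assuming you know which directories each application writes to. The function names `dir_sizes` and `top_growers` are hypothetical, and this approach sees only file sizes, not per-process I/O.

```python
import os
from pathlib import Path


def dir_sizes(root: str) -> dict[str, int]:
    """Total bytes under each top-level entry of `root`; files directly in `root` count under '.'."""
    sizes: dict[str, int] = {}
    root_path = Path(root)
    for dirpath, _dirnames, filenames in os.walk(root):
        rel = Path(dirpath).relative_to(root_path)
        top = rel.parts[0] if rel.parts else "."
        for name in filenames:
            try:
                size = os.path.getsize(os.path.join(dirpath, name))
            except OSError:
                continue  # file vanished or is unreadable; skip it
            sizes[top] = sizes.get(top, 0) + size
    return sizes


def top_growers(before: dict[str, int], after: dict[str, int],
                limit: int = 5) -> list[tuple[str, int]]:
    """Directories ranked by growth in bytes between two snapshots (growing ones only)."""
    growth = {d: after.get(d, 0) - before.get(d, 0) for d in set(before) | set(after)}
    ranked = sorted(growth.items(), key=lambda kv: kv[1], reverse=True)
    return [(d, g) for d, g in ranked[:limit] if g > 0]
```

For example, you could snapshot a data volume with `dir_sizes`, take a second snapshot an hour later, and pass both to `top_growers`. If an application's log directory tops the list, that suggests a fault worth fixing rather than a capacity shortfall.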

To overcome this challenge, you need to:

Organizations that run high-performance applications for their business functions usually operate with correspondingly high disk utilization. This requires network admins to monitor the disk space of network devices constantly and take appropriate action, including upgrading hardware, when unusual usage patterns arise.

When it comes to hardware upgrades, one of the biggest challenges is keeping the hardware configuration recorded in the monitoring tool consistent with the actual on-field systems. There's often a communication gap between the on-field team and the monitoring team, which hurts their productivity and increases the mean time to resolve (MTTR) when a fault occurs.

Let's say the on-field hardware team upgrades the hard disks on devices X, Y, and Z but forgets to inform the monitoring team. If X, Y, and Z then send out disk space alerts, the network admin, unaware of the new disk capacity, may spend time troubleshooting before discovering that the disks have already been upgraded and disk space isn't a concern.
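One way to close this gap is to periodically reconcile the capacity each device has in the monitoring tool against the on-field hardware inventory and flag any differences. The Python sketch below assumes both sources can be exported as simple device-to-capacity mappings; the `Mismatch` type, the `reconcile` function, and the sample data are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Mismatch:
    device: str
    recorded_gb: Optional[float]  # capacity the monitoring tool has on file (None = unknown to it)
    actual_gb: Optional[float]    # capacity in the hardware inventory (None = not in inventory)


def reconcile(recorded: dict[str, float],
              actual: dict[str, float],
              tolerance_gb: float = 1.0) -> list[Mismatch]:
    """List devices whose recorded disk capacity differs from the on-field inventory."""
    mismatches = []
    for device in sorted(set(recorded) | set(actual)):
        rec, act = recorded.get(device), actual.get(device)
        if rec is None or act is None or abs(rec - act) > tolerance_gb:
            mismatches.append(Mismatch(device, rec, act))
    return mismatches


if __name__ == "__main__":
    # Hypothetical data: the field team upgraded X, Y, and Z from 500 GB to 1,000 GB.
    monitoring_tool = {"X": 500, "Y": 500, "Z": 500, "W": 500}
    field_inventory = {"X": 1000, "Y": 1000, "Z": 1000, "W": 500}
    for m in reconcile(monitoring_tool, field_inventory):
        print(f"{m.device}: tool says {m.recorded_gb} GB, inventory says {m.actual_gb} GB")
```

Running such a check after every maintenance window, or before acting on a disk space alert, would surface the X, Y, and Z upgrade before the admin starts troubleshooting.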

To avert this, you should establish a system to:

Servers may experience increased disk usage due to the malfunction or failure of other devices they're topologically connected to, such as routers or switches. Determining whether outages stem from insufficient disk space or from failed dependent devices requires a much broader perspective, which costs time, money, and effort. But paying that price can prevent the downtime and potential business loss that excessive disk space consumption might otherwise cause.

Simply put, since the server's disk performance also relies on its dependent devices, any malfunctions in the devices will eventually affect the server's disk utilization. When you know which devices are dependent on servers and vice versa, you'll get a clear picture of the network architecture and find the source of performance bottlenecks.
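A dependency map makes this kind of triage mechanical: when a server raises a disk alert, first check whether any device it depends on is down. The Python sketch below walks a hypothetical topology breadth-first; the device names, the `DEPENDS_ON` map, and the helper functions are illustrative assumptions, and in practice the map would come from your network discovery or CMDB data.

```python
from collections import deque

# Hypothetical topology: each device maps to the upstream devices it depends on.
DEPENDS_ON = {
    "sql-server-01": ["switch-a"],
    "switch-a": ["core-router"],
    "core-router": [],
}


def upstream_failures(device: str, depends_on: dict[str, list[str]],
                      down: set[str]) -> list[str]:
    """Upstream devices of `device` that are currently down, nearest first (breadth-first)."""
    seen = {device}
    queue = deque(depends_on.get(device, []))
    failed = []
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        if node in down:
            failed.append(node)
        queue.extend(depends_on.get(node, []))
    return failed


def triage(device: str, depends_on: dict[str, list[str]], down: set[str]) -> str:
    """Suggest where to look first when `device` raises a disk space alert."""
    culprits = upstream_failures(device, depends_on, down)
    if culprits:
        return f"{device}: check upstream {', '.join(culprits)} before treating this as a disk space issue"
    return f"{device}: no upstream faults found; investigate disk usage on the server itself"


if __name__ == "__main__":
    print(triage("sql-server-01", DEPENDS_ON, down={"core-router"}))
```

Breadth-first order lists the nearest failed dependency first, which is usually the most likely culprit to check.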

Hence, for a successful disk monitoring strategy, you need to:

Analyzing how disk space consumption changes with business trends helps network admins set disk space monitoring thresholds for the short term, but it doesn't help in the long run because disk space usage is dynamic.

For example, you might set the threshold for a server's disk based on its usage pattern in a particular week. But that pattern may not hold the following week or a few months later: users may access the database more often on weekdays than on weekends, or run critical processes during the first quarter and lower-priority tasks afterward. These patterns vary from business to business and shape disk data growth trends.
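Fitting a trend over months of samples, rather than a single week, smooths out weekday and weekend swings and gives a rough estimate of when a disk will fill. The Python sketch below uses an ordinary least-squares straight line; treating growth as linear is a simplifying assumption (real growth may be seasonal or accelerating), and the sample figures and function names are hypothetical.

```python
from typing import Optional, Sequence


def linear_fit(xs: Sequence[float], ys: Sequence[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired samples")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_full(days: Sequence[float], used_gb: Sequence[float],
                    capacity_gb: float) -> Optional[float]:
    """Days after the last sample until the fitted trend reaches capacity (None if not growing)."""
    slope, intercept = linear_fit(days, used_gb)
    if slope <= 0:
        return None
    full_at = (capacity_gb - intercept) / slope
    return max(full_at - days[-1], 0.0)


if __name__ == "__main__":
    # Hypothetical monthly samples: day offset and used space in GB on a 1,000 GB disk.
    days = [0, 30, 60, 90, 120, 150]
    used = [400, 428, 465, 489, 521, 552]
    remaining = days_until_full(days, used, capacity_gb=1000)
    print(f"Projected to fill in about {remaining:.0f} days" if remaining is not None
          else "No growth trend detected")
```

Python 3.10 and later also provide `statistics.linear_regression`, which returns the same slope and intercept; the fit is written out here to keep the arithmetic visible.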

Here, determining the ideal monitoring baseline is difficult due to a lack of long-term focus on growth trends. For long-term analysis:

Here's a quick recap of the disk space monitoring challenges we covered and their solutions:

On a final note, we recommend that you use a comprehensive tool that can easily overcome all these challenges and enhance your disk space monitoring experience. One such tool is ManageEngine OpManager!

OpManager is a powerful and easy-to-use disk space monitoring solution with over 230 dashboard widgets for monitoring specific disk performance metrics, including Disk read requests, Used Disk Space in GB, Disk Write Latency, and Disk I/O Usage. You can use OpManager as a disk space monitoring tool for Windows, Linux, Unix, and Solaris servers. Leveraging OpManager's AI-based adaptive thresholds, IT admins across the globe have been able to drastically reduce the manual effort involved in proactive disk space monitoring, thereby optimizing disk utilization practices and creating an effective network infrastructure.


New to OpManager? Schedule a personalized demo or download our free 30-day trial to explore how OpManager can simplify your disk space monitoring tasks!